How are regulators thinking about AI in medicines regulation?

In 2021 the International Coalition of Medicines Regulatory Authorities (ICMRA) published a one-of-a-kind report on the use of AI to develop medicines.

07.06.2022

First published in our Biotech Review of the year – issue 9.

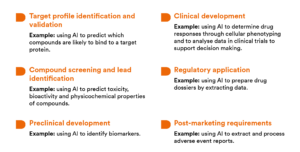

The ICMRA report comes at a time when AI technologies are being increasingly applied in the development of medicines, which brings unique challenges to the existing medicines and medical devices regulatory frameworks. There is potential for AI to be applied throughout the entire lifecycle of a medicine, all the way from target identification to post-marketing, and regulators across the world are getting together to ensure harmonisation around the standards and practices to be applied to the use of AI in medicines regulation.

The report concludes with an array of detailed recommendations for regulators and stakeholders on how to address these challenges. Many of these recommendations result from stress-testing of the existing frameworks through two hypothetical case studies set out in the report.

General recommendations

A central theme that runs through the general recommendations put forward in the report is that of harmonisation. In broad terms, the report proposes to achieve increased harmonisation of approaches to AI in the context of medicines regulation by establishing an infrastructure to facilitate better communication and the sharing of ideas and experiences relating to AI and medicines regulation.

The report suggests sharing experiences of regulating the use of AI by establishing a permanent ICMRA working group dedicated to AI or by introducing AI as a standing ICMRA agenda item. This would also allow best practices to be shared among the ICMRA member agencies. There is also a suggestion that international guidance should be drawn up to cover the development and use of AI in the context of medicinal products.

Regulators are encouraged to engage with existing ethics committee networks and AI expert groups to collaborate on the ethical issues of AI in the development, use and regulation of medicines. Collaboration among regulatory authorities in the medicines and medical devices sectors is promoted, with the aim of encouraging early interactions with developers in order to harmonise opinions on AI in specific medicines contexts.

A risk-based approach to assessing and regulating AI is also advocated. This chimes with other recent developments in AI-focussed legislation, such as the European Commission’s draft proposal for a Regulation on a European Approach for AI[1]. The Commission’s draft proposal contains requirements and standards for AI systems regarded as “high risk” (which would need to undergo a conformity assessment before being placed on the market) and there is an emphasis on categorising the AI system according to its risk profile.

The report acknowledges some of the challenges of scientifically and clinically validating the use of AI, such as access to the underlying algorithms and datasets. To validate the use of AI, the AI must be sufficiently understandable and the regulator must have access to the algorithms and datasets that shape the functionality of the AI system. However, facilitating this access could require changes to legislation.

In our view, these are logical and achievable recommendations. AI will clearly impact medicines regulation in many different, cross-sectoral ways, so it is important that AI is not considered in isolation or only in the context of specific parts of medicines regulation. A more concerted and holistic approach to regulation should ultimately benefit patients and could accelerate the availability of novel treatments and technologies. At present, for example, there is limited collaboration between the medicines and medical devices regulators when approving drug-device combination products (such as inhalers), which can lead to challenges for developers.

How would it work in practice? Two case studies

As part of adapting regulatory frameworks to facilitate safe and timely access to innovative medicines, the ICMRA used horizon scanning to identify challenging topics. ICMRA members then developed two hypothetical case studies and used them to ‘stress-test’ existing regulatory systems, identify the areas where change may be needed and develop recommendations to adapt them.

The first case study focusses on the application of AI at multiple stages of the development of a medicine, from pre-trial to post-approval, and involves the use of AI software as a medical device (SaMD) in the form of a Central Nervous System (CNS) App. The theoretical CNS App would record and analyse baseline disease status and diagnosis for selecting patients to participate in a clinical trial. It would also monitor disease status, adherence and response to therapies in both clinical trial and post-approval settings, and would provide personalised dosage advice post-approval. The report uses this hypothetical case study to identify further specific recommendations related to the use of AI SaMD in combination with medicines, including specific recommendations for the EU.

The report recommends that sponsors, developers and pharmaceutical companies strengthen governance structures to oversee algorithms and AI Apps that are closely linked to the benefit/risk ratio of a medicinal product. The report states that a multi-disciplinary oversight committee should be in place during product development to understand and manage potential consequences of high-risk AI systems and that healthcare professionals should be involved at an early stage and informed of how the AI system is monitoring patients and influencing medicine use.

There are further recommendations for agreeing regulatory guidelines for the development of AI systems in this context. The report sets out many areas that such guidelines should address, including:

- Data quality: guidelines should define data provenance, ensure data are standardised and that sources of data are authenticated. Guidelines should also set out an external validation process.

- Quality assurance: guidelines should describe processes for ensuring reliability and error detection. These processes must consider the integrity of the data and how it is acquired. The guidelines should also cover “chain of custody”. As AI systems can adapt and change without direct external stimulus (for example through the processing of additional datasets or the analysis of patient outcomes resulting from the AI’s decisions) it is important to capture a record of how and why the system is changing. The report recommends that guidelines should provide that AI systems must guarantee the lineage and flow of data, tracking of modifications and the ability to detect anomalies to ensure accountability and transparency.

- SaMD: existing medical device guidelines related to SaMD could be further developed to cover algorithm transparency, comprehensibility, validity and reliability.

- Ethical principles: guidelines should consider whether AI SaMD used in combination with medicines should adhere to the principles of necessity (only collecting data needed for the purpose of the product), proportionality (only collecting as much data as needed) and subsidiarity (using the least sensitive data when possible).

- Real world performance: guidelines are needed to develop a system to monitor the real world performance of AI-based software.
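To make the “chain of custody” idea in the quality assurance point more concrete, the following is a minimal sketch of one way a developer might keep a tamper-evident record of how and why an AI system changes. It is not taken from the report: the `AuditLog` class, the event names and the fields are hypothetical, and hash-chaining is simply one common technique assumed here for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log of changes to an AI system.

    Each entry stores the hash of the previous entry, so editing or
    removing an earlier record breaks the chain and is detectable.
    """

    entries: list = field(default_factory=list)

    def record(self, event: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "index": len(self.entries),
            "event": event,        # what changed, e.g. a new training dataset
            "details": details,    # why it changed and any identifiers
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry has been altered, removed or reordered."""
        prev_hash = GENESIS_HASH
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["index"] != i or entry["prev_hash"] != prev_hash:
                return False
            if _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Illustrative usage with invented events.
log = AuditLog()
log.record("dataset_added", {"dataset": "example-batch-1", "reason": "scheduled retraining"})
log.record("model_updated", {"version": "1.1", "reason": "retrained on example-batch-1"})
print(log.verify())
```

A record of this kind only addresses the tracking of modifications; data provenance, anomaly detection and external validation would each need their own processes.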

The specific recommendations for the EU overlap with some of the recommendations detailed above, such as establishing clear mechanisms for regulatory cooperation between medicines and medical device competent authorities. However, there are additional EU recommendations of interest. For example, it is put forward that regulatory agencies could offer themselves as a trusted party and regulatory data custodian in order to secure access to the sensitive data underpinning marketing authorisation applications that cannot be shared with others. The EU-specific recommendations also recognise the potential for post-authorisation problems, and it is suggested that the framework for marketing authorisation variations may need to be adapted to accommodate updates to AI software linked to medicinal products.

The report’s second case study relates to AI and pharmacovigilance: fulfilling pharmacovigilance obligations via an AI system that screens both literature and signals. In the hypothetical case, machine learning methods were trained by pharmacovigilance specialists using a large existing bibliographic and signal training dataset. A dictionary of signal search terms was built for investigational medicinal substances, indications and adverse events. The software obtained information by checking publications and signals, which were filtered and ranked by relevance. The results of the manual and machine searches were then compared. The machine had 100% sensitivity and routinely identified >4 times the number of relevant signals. The machine learning algorithms were also tested with several further datasets and exhibited similar sensitivity and specificity, exceeding human screening results.
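For readers less familiar with the metrics used in this case study, the sketch below shows how sensitivity and specificity could be calculated when comparing a manual and a machine search against an agreed set of relevant items. The function name and all of the data are invented for illustration and are not drawn from the report; the sketch also assumes that every flagged and relevant item belongs to the screened set.

```python
def screening_metrics(flagged: set, relevant: set, screened: set) -> dict:
    """Compare a search's flagged items with the items judged truly relevant.

    Assumes `flagged` and `relevant` are both subsets of `screened`.
    """
    true_pos = len(flagged & relevant)              # relevant and flagged
    false_neg = len(relevant - flagged)             # relevant but missed
    false_pos = len(flagged - relevant)             # flagged but not relevant
    true_neg = len(screened - flagged - relevant)   # correctly ignored

    # Sensitivity: share of relevant items that the search found.
    sensitivity = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else float("nan")
    # Specificity: share of irrelevant items that the search correctly left out.
    specificity = true_neg / (true_neg + false_pos) if (true_neg + false_pos) else float("nan")
    return {"sensitivity": sensitivity, "specificity": specificity}


# Invented toy data: ten screened publications, four of them truly relevant.
screened = set(range(1, 11))
relevant = {1, 2, 3, 4}
manual_flagged = {1, 2}             # the manual search misses two relevant items
machine_flagged = {1, 2, 3, 4, 5}   # the machine finds all four, plus one false alarm

print("manual :", screening_metrics(manual_flagged, relevant, screened))
print("machine:", screening_metrics(machine_flagged, relevant, screened))
```

In this toy example the machine search has a sensitivity of 1.0 because it misses nothing, but its specificity is below 1.0 because of the false alarm, which illustrates why both measures matter when weighing AI screening against human oversight.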

This example is a very important one, as current signal detection and management tools have a heavy manual component that may be hard to sustain in the future, partly due to the growing volume of data from increased adverse drug reaction reporting worldwide. AI systems appear suitable for the detection of safety signals, but the report concludes that finding the right balance between AI and human oversight of signal detection and management will be crucial.

What next?

As the use of AI increases across all stages of a medicine’s lifecycle, both regulators and the industry will require specialist expertise in AI. While the report suggests the introduction of the concept of a qualified person responsible for AI and algorithm oversight compliance, expertise will also be required on the regulators’ side in order to create the necessary standards and the legal and regulatory framework that will ensure harmonisation, reliability and compliance. This of course opens the door to exciting new areas of expertise, as specialists in this field will be in high demand. There is a risk, though, of a lack of skilled personnel to create, understand and operate these AI-based platforms.

All in all, the advancement of AI in medicines development is exciting, as opportunities for AI to benefit researchers, developers and, ultimately, patients are increasing rapidly. The ICMRA has taken the first steps of what will no doubt be a long journey before the recommendations in the report make their way into the legal and regulatory frameworks. We hope this happens sooner rather than later so that access to safe medicines is accelerated without undermining the principles that govern the regulation of medicines.

[1] https://eur-lex.europa.eu/legal-content/EN/TXT/?qid=1623335154975&uri=CELEX%3A52021PC0206

Xisca Borrás

Author

Hugo Kent-Egan

Author